1. The AI Moment in Regulatory Affairs

Artificial intelligence is no longer a future concept in regulatory and quality work. It already shapes how organizations draft, review, organize, and assess complex content throughout the product lifecycle. In GxP environments, productivity alone is insufficient. The key question is whether AI-enabled work withstands scrutiny: whether its intended use is defined, risks are understood, outputs are reviewable, and the overall process remains controlled.

That is the real shift now underway.

The conversation is moving from “Can we use AI?” to “Can we govern AI in a way that is inspection-ready?” Recent FDA and EMA activity makes that direction increasingly clear.

In a GxP-regulated environment, speed without control creates risk.

Regulatory agencies do not ask whether AI is used; they evaluate how it is governed, validated, and controlled, especially when it contributes to content submitted to health authorities.

This is the inflection point:

AI in regulatory workflows must evolve from experimentation to inspection-ready execution.

2. What FDA & EMA Actually Say About AI

In January 2026, the U.S. Food and Drug Administration and European Medicines Agency jointly established 10 Guiding Principles of Good AI Practice (GxP-AI), a landmark step toward global alignment.

These principles are not theoretical; they define how AI is expected to behave in regulated environments. They emphasize:

Human-centric by design

Risk-based approach

Adherence to standards

Clear context of use

Multidisciplinary expertise

Data governance and documentation

Model design and development practices

Risk-based performance assessment

Life cycle management

Clear, essential information

These principles matter because regulators expect AI in regulated settings to be transparent and controlled, not treated as a novelty or as a substitute for human judgment. They call for disciplined AI use within a documented, risk-based, lifecycle-managed model.

FDA’s 2025 draft guidance on AI for regulatory decisions supports this, introducing a risk-based credibility assessment framework linked to a model’s context of use. Under this framework, an AI model is judged by its purpose, its importance to the decision, and the evidence backing its outputs.

Europe’s draft Annex 22 adds a key signal: it targets static, deterministic AI/ML models in critical GMP applications. It states that generative AI and large language models are excluded from that scope and must not be used in critical GMP applications. For non-critical GMP uses, qualified human oversight remains essential.

Regulators are not restricting AI; they are normalizing it under existing GxP principles.

Regulators are clear:

AI is acceptable only when it operates within validated, controlled, and auditable systems.

3. The Overlooked Issue: Validation Content Is Also Regulated Content

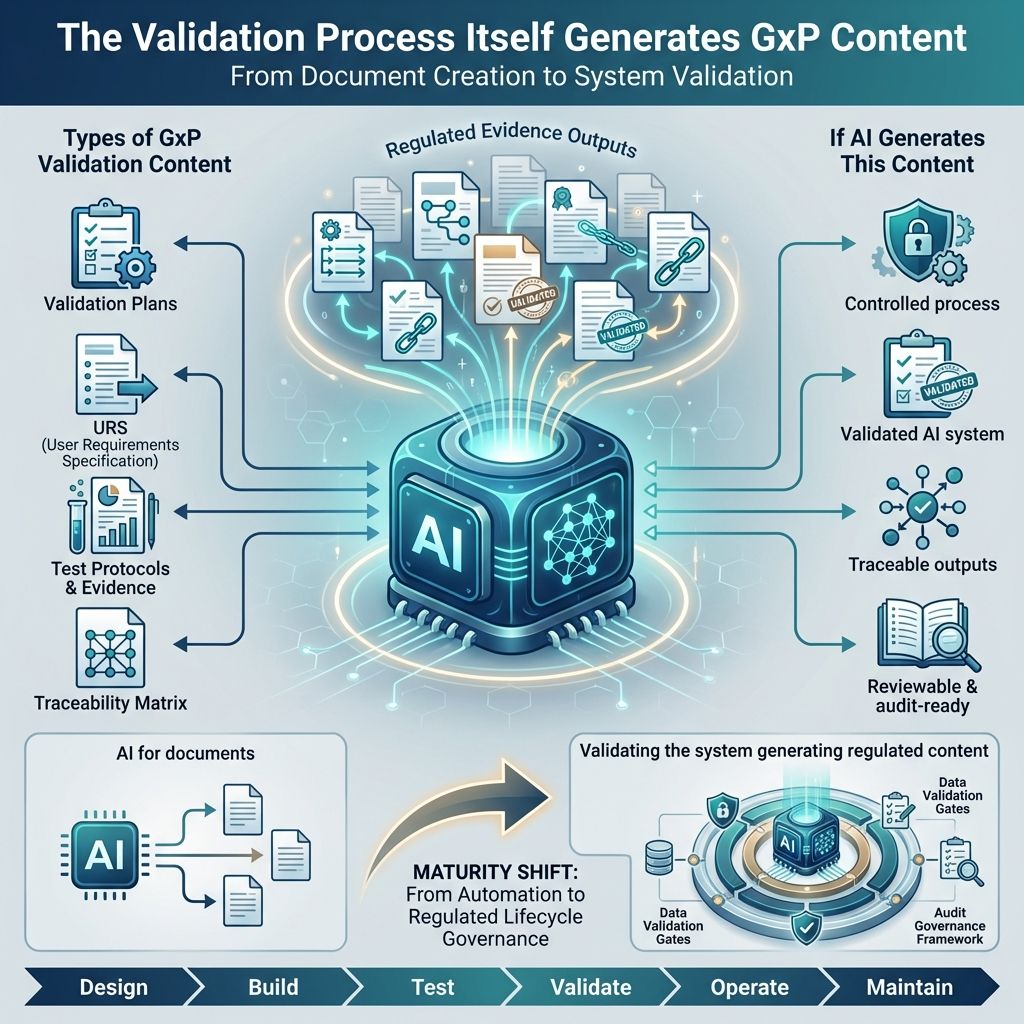

A critical but often overlooked reality:

“The validation process itself generates GxP content”

Validation plans, user requirements, test protocols, traceability matrices, execution records, deviation records, and approval history are not just internal files. They are inspection-critical evidence that a computerized system was properly implemented and controlled, and they demonstrate compliance, consistency, and inspection readiness.

If AI generates validation content, organizations must consider more than speed and drafting efficiency. They should ask:

What is the AI's intended role in this workflow?

What risks arise if the output is incorrect or incomplete?

What human review must occur before accepting the output?

What traceability links the source input to the generated artifact?

How are changes, approvals, and re-generation managed?

This shifts the focus from:

“Using AI for documents”

to

“Validating the system that generates regulated content”

4. Applying GxP-AI Principles to Validation: The cIV Approach

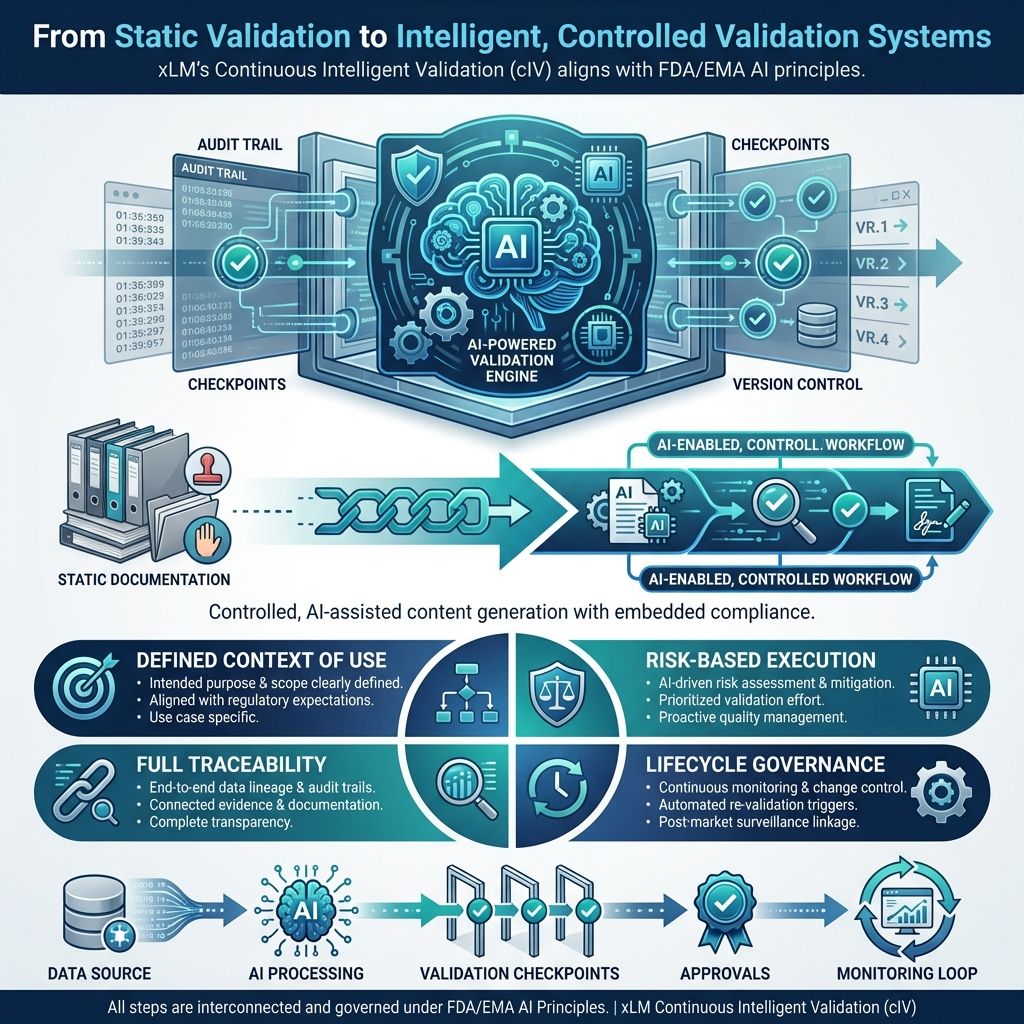

xLM’s Continuous Intelligent Validation (cIV) applies FDA/EMA AI principles directly to the validation lifecycle, transforming it into a structured, AI-enabled, compliant workflow.

Rather than positioning AI as an uncontrolled drafting engine, cIV is a structured, AI-assisted validation operating model in which each task has a clear purpose, control scales with risk, and human review is embedded throughout the lifecycle.

Instead of treating validation as a static documentation exercise, cIV operationalizes it as a:

Controlled, AI-assisted content generation system with embedded compliance

Every step in cIV aligns with GxP-AI expectations:

Defined context of use: Each AI-assisted step ties to a specific validation task, such as generating a draft URS, deriving test cases from approved requirements, or organizing traceability relationships.

Risk-scaled controls: Higher-impact outputs require stricter review, approval, and verification.

Traceability: Source inputs, generated outputs, edits, reviewer actions, and approvals remain in a controlled history.

Lifecycle governance: Changes to workflows, prompts, templates, logic, or model behavior follow change control.

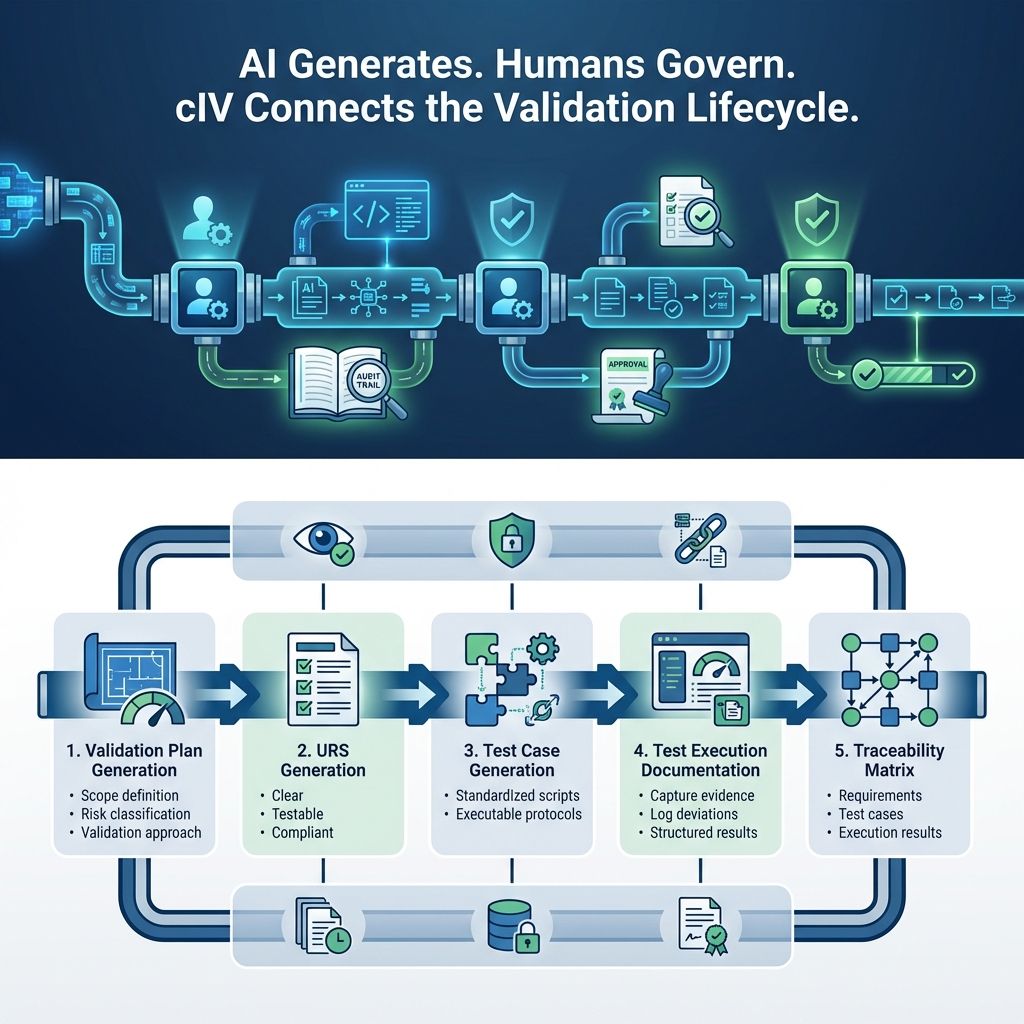

5. cIV Workflow: AI-Driven Validation Content Generation

cIV structures validation into interconnected stages in which AI generates content and humans enforce compliance:

1. Validation Plan Generation

AI generates a structured validation strategy, including:

Scope definition - Outline project boundaries to manage expectations and resources.

Risk classification - Categorize risks by type and severity to prioritize mitigation and allocate resources.

Validation approach - Define methods and criteria to verify outcomes meet standards and expectations.

This ensures consistency and aligns with regulatory expectations.

2. URS (User Requirements Specification)

AI converts inputs into a URS document that is:

Clear - Information is easy to understand, leaving no ambiguity.

Testable - Each requirement can be verified through tests or evaluations.

Compliant - All requirements comply with relevant standards, regulations, and guidelines.

This establishes a strong foundation for validation.

3. Test Case Generation

AI translates requirements into:

Standardized test scripts ensuring consistency and repeatability.

Executable validation protocols automating verification for accurate results.

This ensures completeness and reduces manual inconsistencies.

4. Test Execution Documentation

AI assists in:

Capturing execution evidence with details for verification and audits.

Logging deviations to document discrepancies for root cause analysis and resolution.

Structuring results clearly to analyze outcomes and support decisions.

All outputs are maintained in an audit-ready format.

5. Traceability Matrix

AI ensures complete linkage across:

Requirements - The key functionalities and features needed to meet project goals.

Test cases - The inputs, expected outputs, and test conditions that verify correct implementation.

Execution results - The test outcomes, including passes, failures, and observations.

This provides full transparency for inspections.
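The linkage described above can be illustrated with a minimal sketch. It is not how cIV is implemented; the names `ValidationTest`, `build_trace_matrix`, and `find_gaps`, the ID formats, and the status strings are all assumptions made for illustration. The sketch builds one row per requirement and flags any requirement that has no test case or has a test that has not passed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidationTest:
    """Hypothetical test case record linked to one or more requirements."""
    test_id: str
    requirement_ids: tuple


def build_trace_matrix(requirement_ids, test_cases, results):
    """Return {requirement_id: [(test_id, status), ...]} for every requirement."""
    matrix = {req_id: [] for req_id in requirement_ids}
    for tc in test_cases:
        for req_id in tc.requirement_ids:
            if req_id in matrix:
                # A test with no recorded result is reported as not executed.
                status = results.get(tc.test_id, "NOT EXECUTED")
                matrix[req_id].append((tc.test_id, status))
    return matrix


def find_gaps(matrix):
    """List requirements with no test case or with a test that has not passed."""
    gaps = {}
    for req_id, links in matrix.items():
        if not links:
            gaps[req_id] = "no test case"
        elif any(status != "PASS" for _, status in links):
            gaps[req_id] = "open test result"
    return gaps


# Illustrative data only.
requirements = ["URS-001", "URS-002", "URS-003"]
tests = [
    ValidationTest("TC-01", ("URS-001",)),
    ValidationTest("TC-02", ("URS-002",)),
]
results = {"TC-01": "PASS"}

matrix = build_trace_matrix(requirements, tests, results)
gaps = find_gaps(matrix)
```

In this example `gaps` reports URS-002 as having an open test result and URS-003 as having no test case, which is the kind of finding a reviewer would want surfaced before an inspection rather than during one.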

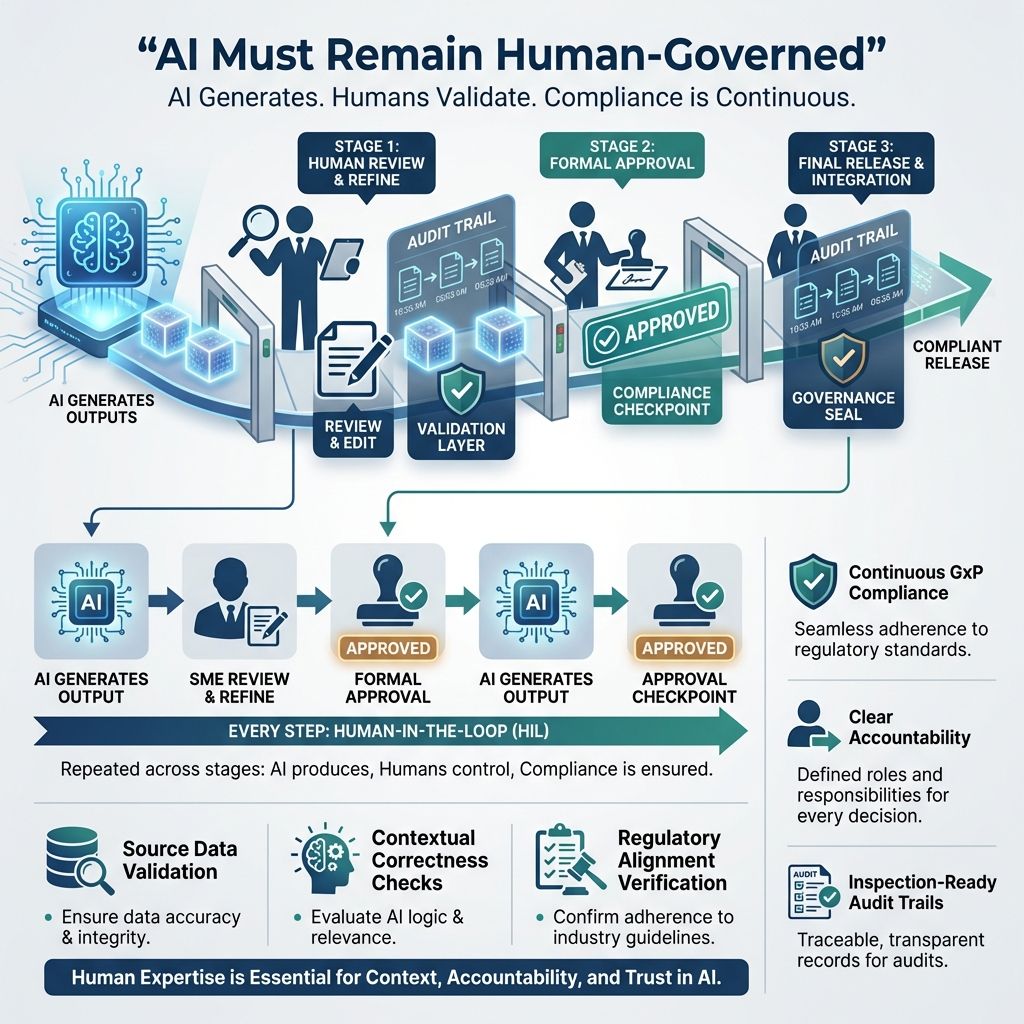

6. Human-in-the-Loop: Embedded at Every Step

The FDA and EMA good AI practice principles include human-centric design and multidisciplinary expertise. The Annex 22 consultation draft also emphasizes qualified personnel, documentation, quality risk management, intended use, and human responsibility for outputs. The core expectation from FDA and EMA is clear:

AI must remain human-governed

cIV embeds Human-in-the-Loop (HIL) as a mandatory control throughout the validation lifecycle.

At every stage:

AI generates output automatically based on input data and predefined algorithms.

A qualified Subject Matter Expert (SME) reviews and refines AI output to ensure accuracy and relevance.

Formal approval is recorded before further progression or implementation.

This ensures:

Continuous GxP compliance - Ongoing adherence to Good Practice rules ensures quality and safety across processes.

Clear accountability - Defining responsibilities helps team members manage compliance effectively.

Inspection-ready audit trails - Maintaining audit trails ensures clear, traceable records for audits.

Human oversight in cIV requires:

Validation of source data to ensure accuracy before processing.

Contextual correctness checks to confirm data is appropriate and relevant.

Verification of regulatory alignment to ensure compliance with laws and guidelines.
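The generate, review, and approve sequence described in this section can be sketched as a small state machine. This is an illustrative assumption, not the cIV implementation: the `Artifact` class, the `Status` values, the user names, and `release_to_next_stage` are hypothetical. The point is that progression is blocked in code until a review and a formal approval have been recorded.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Status(Enum):
    DRAFT = "draft"        # AI-generated, not yet reviewed
    REVIEWED = "reviewed"  # reviewed and refined by a qualified SME
    APPROVED = "approved"  # formal approval recorded


@dataclass
class Artifact:
    artifact_id: str
    content: str
    status: Status = Status.DRAFT
    history: list = field(default_factory=list)

    def _log(self, action, user):
        # Each entry records when the action happened, what it was, and who did it.
        timestamp = datetime.now(timezone.utc).isoformat()
        self.history.append((timestamp, action, user))

    def review(self, sme, revised_content):
        if self.status is not Status.DRAFT:
            raise ValueError("only drafts can be reviewed")
        self.content = revised_content
        self.status = Status.REVIEWED
        self._log("reviewed", sme)

    def approve(self, approver):
        if self.status is not Status.REVIEWED:
            raise ValueError("approval requires a completed SME review")
        self.status = Status.APPROVED
        self._log("approved", approver)


def release_to_next_stage(artifact):
    """Return the content only if formal approval has been recorded."""
    if artifact.status is not Status.APPROVED:
        raise PermissionError(f"{artifact.artifact_id} is not approved")
    return artifact.content


urs = Artifact("URS-DRAFT-01", "AI-generated draft text")
urs.review("sme.jane", "Reviewed requirement text")
urs.approve("qa.lee")
released = release_to_next_stage(urs)
```

Calling `release_to_next_stage` on an artifact that is still a draft raises `PermissionError`, so the approval gate does not depend on anyone remembering to check.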

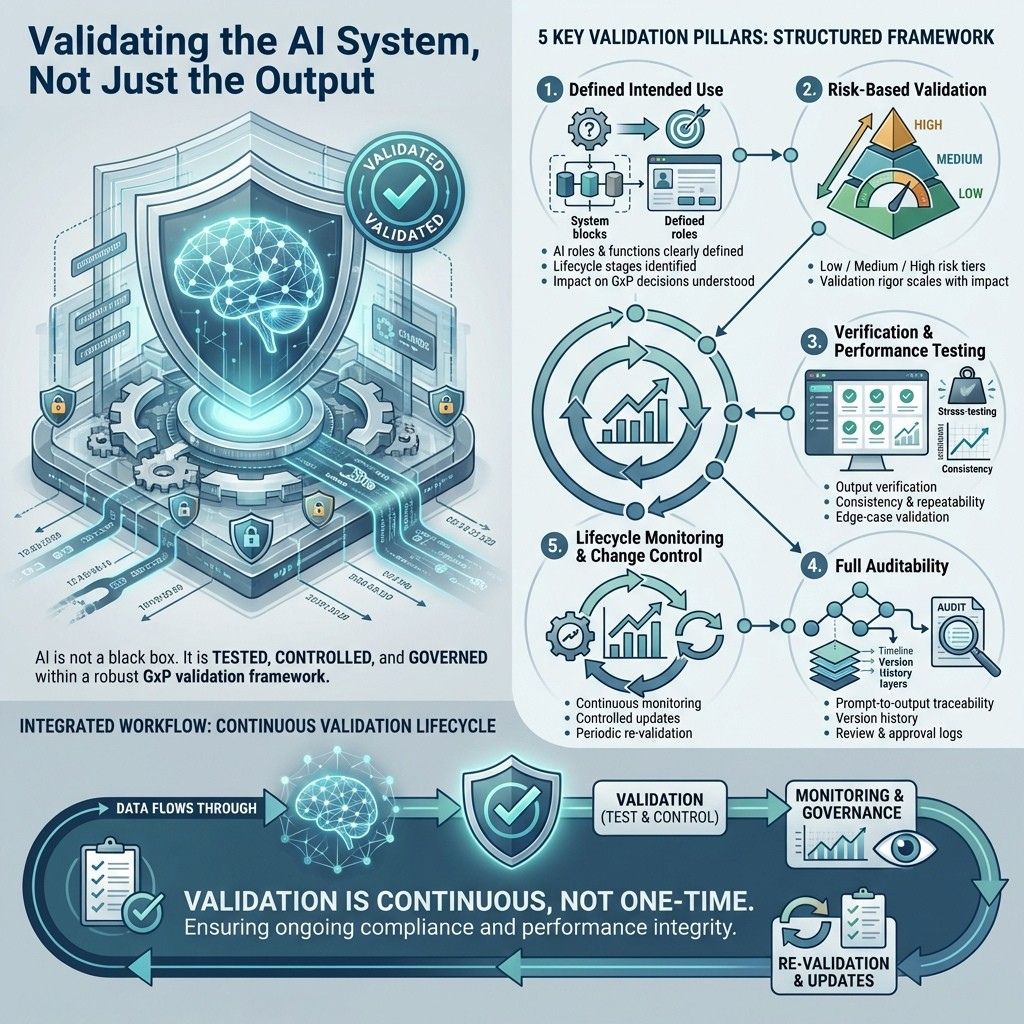

7. Validating cIV Itself: The System Behind the System

A key regulatory expectation is often overlooked:

If AI is used in GxP processes, the AI system itself must be validated

cIV addresses this directly.

1. Defined intended use

cIV clearly defines:

The specific functions and roles of each AI component within the system, highlighting their contributions and purposes.

The stages in the validation lifecycle where these AI components are used, including timing and context.

How these AI components influence decisions related to Good Practice (GxP) compliance, emphasizing their role in ensuring quality and regulatory adherence.

2. Risk-based validation of AI components

Each AI function is assessed by risk:

Low-risk tasks focus on formatting and organizing content with minimal judgment, aiming for clear, consistent structure.

Medium-risk tasks involve creating new content, requiring creativity and accuracy, but not affecting critical decisions.

High-risk tasks produce outputs that impact key decisions and require careful handling to avoid serious errors.

Validation rigor scales accordingly.
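One way to make this scaling explicit is to map each risk tier to a set of required controls. The sketch below is a simplified assumption: the two classification questions, the `Risk` tiers, and the control names in `CONTROLS` are hypothetical placeholders, and a real classification would follow the organization's own quality risk management procedure.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical control sets; each higher tier adds controls to the tier below.
CONTROLS = {
    Risk.LOW: {"sme_review"},
    Risk.MEDIUM: {"sme_review", "source_comparison"},
    Risk.HIGH: {"sme_review", "source_comparison", "independent_qa_approval"},
}


def classify(generates_new_content, affects_critical_decision):
    """Assign a tier using the three task descriptions above."""
    if affects_critical_decision:
        return Risk.HIGH
    if generates_new_content:
        return Risk.MEDIUM
    return Risk.LOW


def required_controls(generates_new_content, affects_critical_decision):
    """Return the controls that must be completed for a task of this kind."""
    return CONTROLS[classify(generates_new_content, affects_critical_decision)]


formatting_task = required_controls(False, False)
drafting_task = required_controls(True, False)
decision_task = required_controls(True, True)
```

Keeping the mapping in one table makes the rationale reviewable: an inspector can see which controls apply to which tier without reading workflow code.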

3. Verification & performance testing

cIV undergoes:

Output verification against expected results to ensure accuracy and correct system behavior.

Consistency and repeatability testing to confirm the system produces the same results under identical conditions.

Boundary and edge-case validation to check system handling of extreme or unusual inputs that might cause unexpected behavior.
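A repeatability test of this kind can be sketched by running the same input several times and comparing fingerprints of the outputs. The sketch assumes the generation step is exposed as a callable; `stub_generator` and `drifting_generator` are hypothetical stand-ins, not real model calls. Exact-match comparison is only meaningful where the system is configured to be deterministic; where some variation is accepted, the acceptance criterion would need to be defined differently.

```python
import hashlib
import itertools


def fingerprint(text):
    """SHA-256 of the text after collapsing whitespace differences."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def repeatability_check(generate, prompt, runs=5):
    """Return True if every run produces the same normalized output."""
    digests = {fingerprint(generate(prompt)) for _ in range(runs)}
    return len(digests) == 1


def stub_generator(prompt):
    """Hypothetical deterministic generator used in place of a real model."""
    return f"Test case derived from: {prompt}"


_variant = itertools.count()


def drifting_generator(prompt):
    """Hypothetical generator whose output changes on every call."""
    return f"{prompt} (variant {next(_variant)})"


stable = repeatability_check(stub_generator, "URS-001")
unstable = repeatability_check(drifting_generator, "URS-001")
```

Here `stable` is `True` and `unstable` is `False`, so the same harness both confirms repeatable behavior and detects drift.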

4. Full auditability

The platform maintains:

Prompt-to-output traceability, allowing tracking and verification of the connection between initial inputs and generated outputs.

Version history, providing a detailed record of all changes and updates, enabling review of past versions and system evolution.

Review and approval logs, documenting evaluation and authorization of changes, ensuring accountability and transparency.
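Prompt-to-output traceability is often paired with tamper evidence. As a sketch under stated assumptions (the `AuditTrail` class, its field names, and the actor names are hypothetical and not taken from cIV), each log entry below stores hashes of the prompt and output plus the hash of the previous entry, so any later edit to a record breaks the chain.

```python
import hashlib
import json


def _digest(record):
    """Hash a record using a canonical JSON encoding."""
    encoded = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class AuditTrail:
    def __init__(self):
        self.entries = []

    def append(self, actor, action, prompt, output):
        record = {
            "seq": len(self.entries),
            "actor": actor,
            "action": action,
            "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
            "output_sha256": hashlib.sha256(output.encode("utf-8")).hexdigest(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else None,
        }
        # The entry hash covers every field above, including the link to the previous entry.
        record["hash"] = _digest(record)
        self.entries.append(record)
        return record

    def verify(self):
        """Return True if no entry has been altered, removed, or reordered."""
        prev = None
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.append("ai.engine", "generated", "Draft URS for LIMS", "URS draft v1")
trail.append("sme.jane", "approved", "Draft URS for LIMS", "URS draft v1")
intact = trail.verify()
```

If someone later changes a stored field, for example `trail.entries[0]["actor"]`, `trail.verify()` returns `False`. A production system would also need protected storage and access control; the hash chain only makes tampering detectable.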

5. Lifecycle monitoring & change control

cIV provides:

Continuous performance monitoring, which tracks system metrics over time to detect degradation.

Controlled updates, which manage model changes under change control to maintain stability.

Periodic re-validation, which confirms at set intervals that the system remains accurate and reliable.

This aligns directly with FDA/EMA expectations for lifecycle-driven AI governance.
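A simple way to enforce change control over prompts, templates, and model versions is to fingerprint the validated configuration and compare it with what is currently deployed. The sketch below is illustrative only: the configuration keys and values (`model_version`, `prompt_template`, `temperature`) are assumed examples, not actual cIV settings.

```python
import hashlib
import json


def config_fingerprint(config):
    """SHA-256 of the configuration in a canonical, key-sorted JSON form."""
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_items(validated, current):
    """Return the sorted list of keys whose values differ between the two configs."""
    keys = sorted(set(validated) | set(current))
    return [key for key in keys if validated.get(key) != current.get(key)]


# Hypothetical baseline captured when the system was last validated.
validated_config = {
    "model_version": "model-A-1.0",
    "prompt_template": "urs_v3",
    "temperature": 0.0,
}

# Hypothetical deployed state: the prompt template has been updated.
current_config = dict(validated_config, prompt_template="urs_v4")

needs_change_control = (
    config_fingerprint(validated_config) != config_fingerprint(current_config)
)
differences = changed_items(validated_config, current_config)
```

In this example `needs_change_control` is `True` and `differences` is `["prompt_template"]`, which tells the quality team exactly which element must be assessed before the updated workflow is used for regulated content.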

8. Final Thoughts: From AI Adoption to Audit Defense

The most mature organizations will not win by using the most AI. They will win by using AI in a way that is governed, explainable, reviewable, and defendable.

Proper use of AI helps organizations reduce manual effort, improve consistency, accelerate validation cycles, and strengthen documentation discipline, but only within a controlled framework with defined intended use, risk-based oversight, documented review, and lifecycle governance.

By applying regulatory AI principles directly to the validation lifecycle, xLM with cIV shifts AI from a productivity tool to a compliance-enabling system.

This means organizations can:

Generate validation content faster without compromising compliance

Ensure every document is traceable, reviewable, and audit-ready

Maintain human accountability across all AI outputs

Validate not just the system under test but also the system generating validation evidence

The conversation is no longer:

“Can AI generate regulatory content?”

The real question is:

“Can you defend the system that generated it?”

FDA and EMA expectations are unequivocal:

AI must be controlled

AI must be transparent

AI must be validated

AI must remain subject to human accountability

The organizations that will lead are not those that adopt AI the fastest, but those that implement it in a way that is:

Structured, governed, and inspection-ready by design